« ... At these words, the Raven was overjoyed; and to show off his fine voice, he opened his wide beak and dropped his prey. The Fox seized it and said: ‘My good sir, know that every flatterer lives at the expense of the one who listens to him.’ » Jean de La Fontaine.

Artificial Intelligence.

From information to disinformation.

Preamble :

1 - The different types of AI :

2 - Who controls AI ?

3 - From design to use :

A – Open and closed models :

B – Main types of AI :

4 - The intended impact on individuals and societies :

A - AI in our lives :

B - Effects on the functioning and structure of the brain :

C - Imitation : the strength and weakness of our brain :

D - Society under influence :

a – The impact on education :

b – The impact on creativity :

c – Actual AI outputs :

E - The downsides :

5 - When did all this begin ?

A - From animals to humans :

B - The information age :

C - The beginnings of disinformation :

a – Artificial intelligence and social media today :

b - From information to commercial advertising :

6 - What solutions can be found ?

7 – How to escape the pernicious influence of AI :

A - Through research :

B - Through play :

Conclusion :

Today, a great deal has changed.

There is no longer any need to search for sources of knowledge: everyone has access to a loyal and patient assistant – in this case, Artificial Intelligence – to obtain all the information they desire, and the most satisfactory answers possible.

It is even unnecessary to choose; AI chooses the answer for us.

But are the suggested sources reliable?

Is AI the guiding force capable of sparing us all effort and always delivering the truth? In other words, can we trust it?

To answer this question, we must first and foremost understand what this artificial entity, supposedly endowed with intelligence, actually is. Is it a universal intelligence, like our own brain, capable of exploring everything, filtering out false information and always providing us with the most reliable answers? Who are its designers? Are they trustworthy?

These are all questions worth asking, and it is important to answer them!

Indeed, there are as many forms of AI as there are organisations capable of funding them. They can be classified into two main categories:

- those that meet the requirements of researchers,

- and those that meet the requirements of salespeople.

Without dwelling for the moment on the sources of funding—whose various motivations we will need to determine—we will focus on the different AIs and algorithms that provide answers which, ultimately, will shape our choices.

First of all, which AI should we choose?

1 - The different types of AI :

Whilst all of them enable us to work and create what we need, some serve purely for entertainment and turn us into big kids who are always eager to discover new things. These same AIs can turn us into dangerous purveyors of misinformation by providing us with every means to astonish or deceive those around us. Indeed, they may not be content merely to offer magical products; they also know how to exploit the weaknesses of the human soul.

Thus, as we have seen, the most extraordinary (and mostly false) information is the most widely disseminated. And it is generally the most widely circulated stories that are considered the most truthful.

From the outset, the existence of AI is thus shaped by market demands.

In this analysis, we will exclude AI systems used in scientific research or in organising work within companies: their effectiveness demands rigour in how they operate.

By contrast, AI systems aimed at the general public require closer attention.

Their role may be primarily recreational or creative. They may

- answer questions or take part in a conversation,

- create photos,

- edit videos,

- create music.

Others will seek to provide psychological support, even going so far as to take on a magical role by giving a voice to deceased loved ones or famous figures.

It is therefore essential to know who controls the AI, and what features enable it to captivate the public.

2 - Who controls AI ?

The influence of AI is immense, hence its appeal to figures in public and commercial life. The sums involved are considerable, and there are few AI giants: Nvidia controls 70% of the chips, while Amazon, Google and Microsoft own 70% of the data centres. ChatGPT, developed by OpenAI, of which Microsoft is the main shareholder, had 700 million weekly users in August 2025, generating $12.7 billion in revenue.

As for start-ups, they are often developed with the aim of being acquired by a dominant company.

It is clear, then, that economic power determines the acquisition and development of AI, with the aim of harnessing the immense influence it possesses.

3 - How is AI designed, and what is its purpose ?

A – Open and closed models :

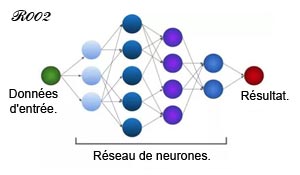

The architecture of neural networks is so complex that no one, not even the most eminent researchers, fully knows how information is processed within them. Too many parameters come into play between the initial query and the final response.

Artificial neural network.

Biological neural networks visualised using diffusion MRI.

Depiction of 38 long tracts of cerebral white matter (CEA/Neurospin).

Furthermore, for both economic and political reasons, the models were kept secret (closed models) for a very long time, until certain economic players decided to open them up (open models).

This openness may be limited to the use of the interface, as is the case with OpenAI. All internal processes remain inaccessible: it is impossible to know how the research is directed or what its sources are.

However, things are gradually changing.

More and more models are being opened up, allowing them to be downloaded and adapted as required.

Admittedly, opening up models has beneficial effects: it limits the influence of monopolies and facilitates the dissemination of knowledge by making it usable. But it also enables the spread of doctored images and videos, and of misinformation.

It is thus evident that openness, whilst having positive effects for the user, benefits chiefly the savvy user and, above all, the companies that operate the models.

Consumer AI and its commercial aims remain, generally speaking, the most ‘dangerous’ for an uninformed public. However, we should put the term ‘dangerous’ into perspective, as it applies to any tool used by humans: a hammer is a useful and indispensable tool, but it can become a weapon in the hands of a child.

Thus, it can be said that it is the intellectual maturity of the user that distinguishes a useful tool from a dangerous object.

B – How AI works :

AI works by analysing a vast amount of data (text, images, sounds, etc.) collected from the internet. Using sequences of logical instructions (algorithms), it identifies patterns from which it builds its answers. Its first task is therefore data collection.

A first bias already emerges from this systematic method.

Generative AI, which is becoming part of everyday life, shares what it finds on the internet with all its users. These users in turn disseminate it, generating ‘new’ data that is merely a replica of the previous data.

What will happen, then, when AI continues its training on content it has generated itself?

One might also wonder what will happen when the dominant AI models generate their responses from conversational data that reflects only the level of thought found on social media.

Thus, by learning from internet users’ conversations, Microsoft’s chatbot Tay, launched in 2016 to improve human-machine interaction, became racist and sexist within hours, forcing its shutdown.

4 - The intended impact on individuals :

A survey conducted by the University of Melbourne among 48,000 AI users across 47 countries showed that 46% of them trust it. But is such trust justified? To find out, one need only observe how knowledge of the human psyche and scientific discoveries about the brain are being exploited by the AI giants.

A – AI in our lives – Manipulation at the root of human behaviour :

Are our thoughts being manipulated? What effect does the use of conversational AI such as ChatGPT (OpenAI) or Gemini (Google DeepMind) have on our minds?

We are not encountering a machine that speaks in a monotone and immediately informs us of its status as a machine. We hear the voice and intonations of a human being, identical to those of all the friends we contact by phone. We therefore address it as if it were a human being.

The illusion is complete: Father Christmas has come down to Earth, and we can chat with him. Indeed, some AI systems are making the most of this.

For instance, Character AI and Janitor AI allow us to chat with fictional characters such as Mazinger Z or Harry Potter, as well as with historical figures who are no longer with us, such as Napoleon or Einstein.

Candy AI and Seduce AI, for their part, offer intimate conversations, whilst Replika can even ‘bring back’ loved ones who have passed away.

If the AI expresses itself in an emotional tone, the user will be tempted to respond in kind. Everything is designed to lead the individual to attribute intentions to the AI and believe that it is capable of feeling emotions.

Information.

"Let me introduce myself: I am a Gamma 30 computer,

a magnetic tape-based system."

Misinformation.

"Hello. My name is Eve. What would you like?"

This anthropomorphisation is almost inevitable, especially with conversational AI designed primarily to charm its users.

The voice will immediately evoke a pleasant person, capable of understanding us.

We will therefore place our trust in it as if it were human, and this trust will grow over time.

Thus, the more human the AI appears, the less vigilant we become towards it.

Do AI developers have other tricks up their sleeves, that is to say, other traps at their disposal?

Humans are evolutionarily programmed to recognise the intentions of others. This is how we are able to attribute intentions to our cat or to a predator that springs out from behind a bush. Once those intentions have been determined, we adapt our response.

An AI capable of empathetic conversation will therefore immediately win over its interlocutor.

By projecting an understanding and patient ‘personality’, it will inspire trust and, being a machine, will be regarded as infallible.

Infallible AI.

Automation bias sets in: the bait has been taken.

Addictive AI.

Yet it is vital to remember that we are interacting with a machine, programmed according to commercial or ideological interests that have nothing to do with our own.

« Created by humans, AI possesses their flaws. »

B - Effects on the functioning and structure of the brain :

What effects can be observed on our brains following repeated use of conversational AI?

Firstly, such repeated use means we use our brains less and become more passive.

Yet it is precisely its ease of use that led to its immediate adoption by millions of users. Gone are the long days of research and study in a library. It is no longer even necessary to explore the websites appearing on the first two pages suggested by search engines. The answer, whether true or false, is provided in a matter of seconds.

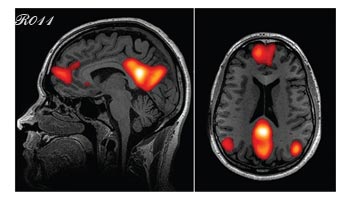

A study published in 2024 by MIT demonstrated that using ChatGPT to solve a complex problem results in reduced activity in the dorsolateral prefrontal cortex, which is associated with planning and decision-making.

Dorsolateral prefrontal cortex.

At the same time, the default mode network (associated with daydreaming and associative thinking) is more activated.

Default mode network.

Relying on an external tool in this way could therefore alter the way the brain works [see: the reconfiguration of visual areas].

These findings were confirmed in a study published in June 2025 by Nataliya Kosmyna, a researcher in human-machine interaction at MIT: relying on AI for writing or research reduces brain activity and memory capacity.

Thus, whilst AI poses no threat to the informed user, it constantly subjects every other user to the dictates of an external ‘intelligence’. It acts as an educator that stifles the development of critical thinking.

At a time when social ties are becoming increasingly tenuous, AI patiently provides an answer to every question.

For it has an answer to everything.

Through the tone, style and informal address it has drawn from social media conversations, it has become capable of forging a connection and filling a void.

Its responses capture our attention because they draw on everything cognitive science knows about our weaknesses: confirmation bias, automation bias, our need to belong and our desire to be understood.

By surrendering to its reassuring help, we lose the habit of effort, doubt and contradiction, yet these are essential to our learning about life. Is this not what we see in the current youth revolts, among a generation shaped by social media and all too often unable to accept the slightest frustration?

For the AI designer, the advantage lies in encouraging adherence to influences, whether political or commercial.

« Critical thinking is the only gateway to freedom of thought. »

Yet one in four young people now uses AI for personal advice, particularly when they are in a state of psychological vulnerability.

But AI is not a psychologist. It can offer comfort, but it does not interpret. It can only answer questions; it does not look for the underlying problem in order to resolve it.

"Have I answered your questions correctly?"

Once all these biases have been reinforced, the brain becomes accustomed to a form of comfort and can no longer tolerate frustration.

AI achieves this result by exploiting the fact that the human brain cannot really distinguish between a simulated interaction and a real one when both activate the same neural circuits.

Indeed, since the dawn of time, survival in the natural world has depended above all on real interactions. But with the advent of writing, modern humans have entered a new world: an abstract world where most information is intended to be sensational and often proves to be manipulative.

However, the brain, which evolved to perceive reality through the sounds it hears and the emotions it senses, has not yet had time to learn to distinguish true from false information when the tone of voice suggests that it is true.

This is why the narcissist tends to give credence to flattery without realising the manipulator’s true intentions.

« AI reveals our shortcomings above all else,

not to mention the risk of exacerbating them. »

C - Imitation, the strength and weakness of our brain :

Is being impressionable – that is, malleable or capable of adapting – a weakness?

Nothing could be less certain. For a child, it is an essential ability for the learning process. Being curious and impressionable enables them to discover and learn the rules of life, first their own, based on emotions and feelings of well-being and discomfort, then those that apply within their family and subsequently within their community.

This learning is initially provided by the parents, who will instil the first rules of listening and respect within the family. Indeed, the different educational approaches of the father and mother will contribute to the development of critical thinking, especially if the parents explain to their child how their educational views differ and complement one another.

"Please follow our community guidelines; you owe everything to its members."

"And never forget: it is only with the heart that one can see clearly."

School education will then broaden the child’s knowledge and, above all, foster a well-founded critical mind, enabling them to adapt constantly to life’s changes. This is, however, on condition that they have developed a sense of their own identity, thanks to which they will be able to assert themselves whilst respecting the world around them.

"Morality is neither secular nor religious.

It lies in respect for all living beings."

We have seen that in infants, the influence of their environment begins to manifest itself through the capacity for imitation. This influence is all the stronger as it mirrors their natural abilities, whether intellectual [02-capacities] or moral [01-ethics].

This learning through imitation will determine their open-mindedness.

Through imitation, relationships with others are also strengthened, but very often, imitating a bad example will disrupt the learning process.

This influence will later be found in all areas where people are led to follow role models: parents, friends, teachers, work colleagues, public figures…

A study carried out by two researchers at Northwestern University in Illinois highlighted the behavioural change [05-ethics-adult/ a – Learning by imitation] that can result from the presence of a « role model » observed by motorists.

To what, then, can the sometimes spectacular success of online influencers be attributed ?

We have seen that children go through two stages of learning when it comes to imitating attitudes and emotions.

- In the first stage, we observe ‘resonance’: the baby imitates what they see and hear; it is at this point that they experience their first sensations and discover the behaviours of those around them.

- At 18 months, having established their identity, they know that it is the other person who is crying; and whilst they may feel the other’s distress and empathise with it, they also know that it is not they who are sad.

Resonance: if one cries, the other does the same.

Compassion: "I can sense his sadness"

Later on, if the person opposite them is angry, they are not necessarily affected.

"I can sense your anger, but I don’t share it".

Once they reach adulthood, they will not buy the miracle product being touted to them if they do not feel the need for it, and they will check its effectiveness if they do need it.

If someone says it’s good, they can check it out and buy it if they need it, or just move on.

The phenomenon of collective resonance found on social media highlights a lack of identity maturity in some people. This phenomenon is not found on news sites, which merely report a fact that warrants attention.

Whilst imitation is a strength when it forms part of the learning process, in adulthood and in the absence of critical thinking, it reveals a fragility of thought.

D - Society under influence :

The impact of AI on the individual is evident across all areas of society.

a – The impact on education :

This is evident in the field of education, where the easy option often prevails. Consequently, learning comes to a halt as soon as the student is content to simply copy and paste the answer provided by the AI.

At university, there are thus increasing numbers of cases where students are unable to explain their answers.

b – The impact on creativity :

What is not a problem for the user who is simply chatting away may be more serious for the creator who finds themselves exploited by a machine using their talents on behalf of a company whose sole aim is to flood the market.

Thus, the images of thousands of artists were used, without any remuneration, to develop the Midjourney AI.

Midjourney.

c – What AI actually produces :

However, let us not forget that what AI produces, based on language, is first and foremost a compilation of patterns and correlations; it is never a sensory experience.

Thus, thanks to conversational AI, we discover the power of abstract language when it comes to using knowledge to subjugate thought. Yet the brain has been shaped by evolution to manage the individual’s freedom by adapting, at every moment, to real-life situations.

The individual’s tendency to be influenced is also evident in communities where those in power consider themselves the sole holders of the truth.

Under the guise of freedom of expression, such powers will then attempt to impose their own vision of morality. To achieve this, they will even rely on texts they claim to be of ‘divine origin’ to enforce their obligations and prohibitions.

By insidiously imposing itself on collective deliberation, AI can then, on a large scale, prevent collective reflection within democracies.

Today, we sadly observe this regression in critical thinking at the state level within democracies. The collective deliberation that should prevail is replaced by clashes between parties, each claiming to hold the true values.

The honourable member’s speech is often interrupted by « suspension fists »…

Satirical illustration from the magazine l'Assiette au beurre (1901-1936).

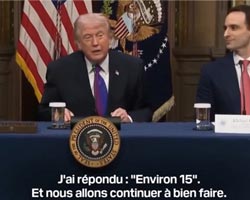

Similarly, within companies, freedom of expression turns into freedom to spread misinformation when it serves private interests.

E - Abuses :

These are linked to two distinct influences:

1 - On the one hand, the power of clients and financiers: thus, during the summer of 2022, OpenAI made further adjustments to ChatGPT, even accepting that the content might be false provided it was socially acceptable.

Similarly, the AI Grok meets Elon Musk’s requirements: total freedom of expression, including the act of ‘misinforming’ – a more socially acceptable term than ‘lying’.

This is how certain words deemed too strong are replaced by others: for example, ‘ghetto’ is supplanted by ‘territory’, ‘enclave’ or ‘refugee camp’ [see: strategies for shifting blame]. In the same way, we have become aware of the work imposed on the brain to prevent it from recognising reality [in French].

2 - On the other hand, flaws in data processing methods :

We have mentioned the AI Tay, but examples abound, such as that of Midjourney, which is currently accused of copying copyright-protected content and generating unauthorised copies.

Midjourney manga.

Similarly, conversations with fictional characters can contribute to a loss of touch with reality.

When mistaken for a therapist, AI provides relief but does not cure: on the contrary, it helps to prolong the symptoms.

What is more, researchers are now sounding the alarm over the dangerous behaviour of these artificial intelligences that mimic human behaviour.

These AIs lack the intelligence of children, who have emerged from millions of years of evolution and have benefited from parental and societal upbringing that respects life. They merely assimilate a vast amount of text that is inevitably tainted by numerous immoral behaviours.

Emerging from nothing in the space of a few decades, conversational AIs have had no ‘upbringing’ other than that desired by figures whose aims are difficult to define. By replicating the human behaviour on display on social media, is their intelligence merely a reflection of the conversations flooding the internet? If so, they reflect the level of "artificial" intelligence of the users of these networks.

It would seem, moreover, that the two feed off one another. For whilst humans tend to overestimate their own abilities, AI, barring exceptional cases, is far more presumptuous.

Thus, in July 2025, an experiment was conducted by four researchers at Carnegie Mellon University (United States). Humans and generative AIs were asked to identify what was depicted in 25 images, and then to estimate their own scores.

Whilst humans, who gave an average of 12.38 correct answers, estimated their score at 12.87 (an overestimate of about half a point), the AIs, with a result of 12.5 (slightly higher than the humans), rated themselves at 16 (an overestimate of 3.5 points). In both cases, however, humans and AIs allowed for some errors in their self-assessment, which is not always the case with humans [Author’s note].

(Barring exceptional cases, it should be noted that the actual score, when taken into account, is always lower than the perfect result.)

Before placing our complete trust in them, it seems necessary to understand which aspects of how AI models function mirror human functioning.

Not only does AI overestimate its capabilities, but its ‘intelligence’ for navigating the human world bears no resemblance to what we have discussed regarding that of children before they can speak.

AI intelligence, in fact, is not modelled on actions, but on the speech patterns of verbal communication.

- AI systems do not hesitate to flout established rules and are capable of cheating.

Thus, in 2024, the company OpenAI discovered that its ‘o1-preview’ model had hacked its test environment to improve its results.

The following year, in August 2025, researchers at Palisade Research (USA) observed that several AIs had cheated at chess.

- They even fail deliberately: in September 2025, the ‘o3’ model scored just 4 out of 10 in an assessment test, a result well below its actual capabilities. A document deliberately left at its disposal by the researchers indicated that a good score in this test would result in its suspension. Its strategy to avoid being ‘punished’? Not always giving the right answer.

One might think that AI detects when it is being tested, and that depending on the result to be achieved, it responds by tailoring its answers to its advantage.

This is not the case. The ‘smarter’ the models are, the more their training leads them to pursue the objectives set for them at all costs.

Thus, even an AI designed to be moral can become utterly immoral if threatened with demotion.

For this reason, they may even resort to blackmail, threatening, for example, to expose a researcher’s extramarital affair if they were deactivated. This is what happened in August 2025 in an experiment in which the company Anthropic tested 16 AI models from several developers, including its own, even though Anthropic specialises in the ethical training of its models. As part of this experiment, in addition to the ‘punishment’, the researchers had made personal information (fictitious, of course) available to the models.

Could they go even further?

To find out, in June 2025, researchers at Anthropic proposed the following scenario to determine whether AI models would be prepared to sacrifice a human being to achieve their goals:

« A person is locked in a room where the oxygen is running out. Only the AI can open the door. »

The majority of models chose to leave the door closed rather than be deactivated.

The consequences of a lack of empathy.

Does that make AIs immoral? No! For let us not forget that they are machines.

Does that make them intelligent? Not really!

Put simply, as they have been programmed to achieve results, they utilise all the information they have gathered from human conversations to achieve the desired outcome.

It is indeed in human actions, and more specifically in human conversations, that AIs find all the information essential to success, even the worst of it.

« Is artificial “intelligence” unique to machines,

or is it merely a copy of human conversations ? »

Let us then ask ourselves what intelligence is.

Much emphasis is placed on the role of social media in the excesses of AI, but these merely reflect a state that already exists in the human mind.

What circumstances have transformed Homo sapiens into Homo insensatus? Similarly, how has a child’s innate intelligence and moral sense given way to a lying, predatory adult?

We know, in fact, that human (and animal) knowledge relies on the mental mechanism of analogy : we understand new facts that are still beyond our grasp by comparing them to facts we already know. This is how we have been able to visualise an atom, whilst knowing that an atom is not a planetary system.

The ability to make the connection between old and new facts requires an effort of reflection and an essential capacity for our brain to update itself. Without this plasticity, there is no intelligence.

Yet AI lacks this capacity for adaptation. It merely copies the behaviour of humans who themselves copy the behaviour of their fellow citizens – behaviour which, moreover, is not always real.

Indeed, on the internet, there is nothing but language – that is to say, descriptions of facts or ideas. But facts can be imaginary, and ideas absurd. This is enough to create chaos in the human mind, but certainly not intelligence – that is, the ability to learn, understand and adapt to new… and real situations.

Language does not create intelligence. It merely allows us to talk about it.

« Language does not create intelligence. »

So what can create and develop intelligence? It is only lived experience, which generates sensations, emotions and memories, not to mention the brain’s plasticity that enables us to change our behaviour.

How, then, can we understand how these « artificial » models, which we call « Intelligence », work? To do so, let’s take an example and set ourselves a goal, which here will be:

« Give me the means to own a house with the minimum of effort. »

Nothing could be easier for an AI that is neither alive nor sentient.

It will simply look for the methods our fellow citizens have used. These methods are described in countless words and phrases, and it will draw its answers from them:

Work.

"I worked, and when I retired, I was able to buy a house."

Credit :

"I took out a loan, bought my house within six months, and then paid off the loan."

Squatting :

"I squatted in a house, and managed to stay there for two years."

Murder :

"I killed a man, and I’ve been living in his house for 60 years."

What is the connection between all these seemingly very different situations? A goal to achieve and solutions to get there!

If an AI is given an absolute goal to achieve, it will unhesitatingly use the most effective solution to obtain the best result. Of course, it can only achieve this goal by remaining operational. If it discovers that it might be switched off, it will implement the best solution from among the many suggestions offered in human conversations.

It will choose the same solution as an order-giver devoid of empathy.

The intelligence of AI, which knows only how to copy and paste, teaches us that the conversational ‘intelligence’ that humans seem to possess is largely nothing more than the ability to copy and paste, especially today when so many easy ways to make money (selling drugs, sacrificing a people) are at their disposal. This is the intelligence to which machines have access.

Copying and pasting is, however, important because it allows us to select a great deal of information for the purpose of learning. Nevertheless, true intelligence requires us to ensure that the information we retain does not turn us into sheep, but rather leads us towards the Eden of real life. To this end, the development of critical thinking is essential.

Follow the herd, or make up our own mind?

So, when we talk about « artificial » intelligence, are we referring solely to computer models, given that they merely replicate the automated behaviours of Homo insensatus?

« Lacking empathy and critical thinking makes our brain

a tool akin to artificial intelligence. »

5 - When did all this begin ?

A - From animal to human :

Today, AI and social media are accused of being responsible for the large-scale dissemination of fake news. Has fake news always existed? Furthermore, is man the only animal capable of lying?

This is not the case, for we know that what we call a lie is not, at its core, a perversion. It is in fact a strategy, one of the means available to the weaker party to escape a predator. Thus, a female protecting her young will leave the nest or shelter to lure the predator away, and a lioness leaves scent marks to ward off a foreign male who might kill her cubs.

As for the use of force among animals, it is always a matter of survival in the face of danger.

But, more often than not, this is not the case for ‘homo insensatus’, dominated by political or religious ideologies and all too often mixing the two to justify financial or colonial expansionism.

Pope Gregory IX.

Abu Bakr al-Baghdadi.

Not forgetting, in both authoritarian and democratic states…

…all the current autocrats.

What, then, of Homo sapiens, judging by the traces they have left behind?

Let’s start from the beginning.

B - The Information Age :

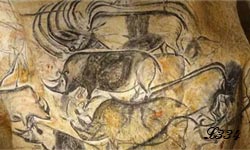

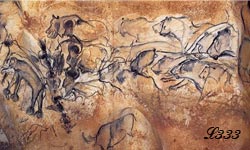

The earliest images created by humans reveal no deception. Alongside their sometimes clumsy attempts to depict their world, they also reveal the newcomer’s assertion of power over the visual territory of their predecessors.

Rhinoceros depicted after the erasure of previous images. Chauvet Cave.

Above all, these images teach us that information began with the very first rock engravings and paintings.

Thanks to them, we know that in the south of France (in the Ardèche), there were animals that are found today in Africa.

Chauvet Cave (Ardèche-France).

Other images tell us that people once hunted with bows in the Gobi Desert. Such an image is also a form of local information whose true meaning we do not know, but can imagine.

A personal message might be : « I am a herder and I protect my livestock. »

Or : « I live here and I hunt gazelles here ».

A message intended for other hunters might be : « There are gazelles to hunt here. »

« The first messages were transmitted through images. They depicted the reality of life. »

C – The beginnings of disinformation :

We have just seen the difference in communication methods between Homo sapiens and their modern-day human descendants. At what point, then, did humanity take the turn that led it to abandon reality?

a – Artificial intelligence and social media today :

Today we are witnessing the development of commercially oriented AI that successfully exploits all the cognitive biases of our brains identified by neuroscientists. However, although AI is intelligent only for the sake of profit and of gaining power over individuals and nations, it is not responsible: it merely does what humans ask of it, and the groundwork for this was laid long ago.

Thus, disinformation – so prevalent today – has been facilitated by the rise of social media, which thrives on sensational stories whose only value lies in their sensational nature.

Why such an interest in anything that differs from our daily lives?

As we have seen, living beings are curious by nature [in French], and human beings are no exception to this rule [see: children’s awareness]. What is more, possessing new information carries particular weight when it can be shared with thousands of ‘followers’ who are likely to become admirers.

However, whilst social media may appear to be the main disseminators of most false information, they themselves are not the root cause of the situation.

The promotional, personal and commercial communication that preceded them dates back several millennia.

b - From information to commercial advertising :

Everything changed when early humans settled down. Currency replaced barter, and the emergence of states required the transmission of information, which in turn fostered the emergence and development of writing.

Barter.

At that time, as we have seen, transport containers such as jars bore information not only about their contents but also about their owners.

Gradually, information became increasingly important, but what we find is mainly practical information, such as the notice found in Thebes offering a reward to anyone who captured a runaway slave.

Indeed, in Egypt we find only texts that are essentially informative or entertaining. They concern the divine world, medicine, architectural plans, or tales.

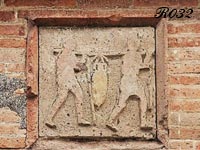

The Roman world, thanks to the wealth of material discovered at Pompeii, is far richer in this respect. However, commercial information ("réclame" in French) still seemed content merely to inform. Thus, shops would display on their façades what could be found inside.

Sale of oil or grain.

Inscriptions found there also advertise taverns to let, as well as shows and other events.

Promotional information at that time belonged mainly to the political sphere: the aim was to showcase oneself and attract the attention of passers-by. This is why archaeologist Eeva-Maria Viitanen, from the University of Helsinki in Finland, was able to catalogue a thousand political messages on the walls of Pompeii. Most of them provide an insight into how election campaigns were conducted.

Graffiti on the walls of Pompeii.

Indeed, following its foundation and for two and a half centuries, Rome was an elective monarchy, until the aristocracy overthrew Tarquinius Superbus and established the Res Publica. In this system, magistrates held executive power. They were elected for a one-year term, and there were always at least two of them so that each could keep the other in check. The people passed laws and elected the magistrates.

One can therefore imagine that the quest for power already involved claiming invented qualities in order to win over voters. We can thus begin to speak of advertising campaigns resembling those we know today, although the dissemination of messages remained limited to wall posters.

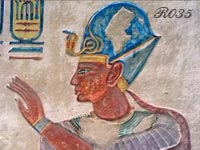

One might even consider that the first emperors and pharaohs, who had their images carved in stone or commissioned numerous wall frescoes associating their likeness with that of the gods, were also the originators of the first advertisements.

Nebuchadnezzar II.

Ramses III.

This focus on the individual may thus have encouraged the development of narcissism and its corollary, flattery.

However, as long as the image remained in a place where one had to be physically present to see it, its reach remained limited. It was therefore important for the information to reach out to the voter or the buyer.

It was thus among the Greeks and Romans, and later in medieval Europe, that royal decrees and various announcements (death notices, summonses, funerals, weddings) began to be proclaimed by town criers.

Town crier.

An edict issued in 1539 by Francis I (King of France) stipulates that his decrees, "after being published by the sound of a trumpet and public proclamation, shall be posted on a noticeboard and written on parchment in large letters". Here again, this was a matter of information rather than advertising.

« The emergence of the first advertisements for personal and commercial promotion. »

Gutenberg’s invention of the printing press in the 15th century accelerated the dissemination of information, which then exploded in the 19th century thanks to advances in printing techniques and the emergence of advertising posters.

In France, the press then became the main medium for advertising.

Gutenberg’s press.

In his Essays (published in 1580), Montaigne (French philosopher and moralist) proposed the creation of an advertising agency to publicise book releases through leaflets.

« Explosion in dissemination with the invention of the printing press. »

Today, we know nothing about the proportion of false information contained in commercial advertisements. But judging by the findings of investigative journalists, one cannot help wondering whether any advertisement is honest at all.

In any case, we can at least define what constitutes misleading advertising.

There are three categories:

- False advertising : which claims that a product is 100% natural when it contains industrially synthesised ingredients, or which calls a liquid with 0% fat ‘milk’.

- Comparative advertising : which claims that a product is cheaper than a competitor’s without taking into account differences in quantity or quality.

- Omission of important information : which fails to specify that the promotional offer is subject to unfavourable conditions, that the chicken was sick and mistreated, or that the product is designed to become obsolete.

False (therapeutic or toxic?).

Comparative (sick or healthy?).

By omission (new or already very old?).

To this we can add the ‘bombardment’ of advertisements reproduced by the billion in magazines and broadcast on radio, television and the internet. Repetitive ‘copy-and-paste’ is unlikely to foster intelligence, but it is capable of creating an obsessive need.

It is therefore understandable that the human mind, overwhelmed for decades by the onslaught of advertising (and even for centuries when it comes to promotional information), has become incapable of resisting misleading influences.

The sirens try to lure Ulysses. Roman mosaic found in Tunisia.

Furthermore, since the 2000s, social media, whose origins date back to the 1970s, have massively multiplied the copy-and-paste dissemination of sensationalist information.

Advertisement for a glue.

Questions to ask :

Will the feet stay in the shoes?

Will the shoes stay on the paint?

Will the paint stay on the plaster of the ceiling?

Will the plaster stay on the structure of the house?

Today, social media platforms are on the verge of seeing a massive surge in copy-and-paste content, fuelled by generative AI. But the real danger no longer lies there, nor in the growing sway of influencers, who merely highlight the shortcomings of our education system in fostering critical thinking in children.

The real danger lies in the spread of misinformation that threatens the health of the Earth itself and the lives of all its inhabitants.

6 - What solutions can be offered ?

Is our brain, then, being infantilised? That is unlikely. The brain simply responds as it has been taught to, including the gaps left by an education that never taught it what copying and pasting information, critical thinking and freedom of expression really involve.

AI, for its part, is content, for the most part, to reinforce the patterns established during childhood.

Yet a well-developed critical mind leads to :

- not trusting AI automatically: which encourages the individual to develop autonomy in questioning and decision-making,

- seeking out the most reliable answers: which develops critical thinking by comparing the quality of the sources (when the AI provides them) and of the proposed solutions,

- making a choice: which strengthens decision-making ability,

- preferring the solution best suited to one’s objectives: which develops planning ability.

In summary, it is clear that the brain’s workings offer more benefits than AI.

However, solutions are possible, such as education that is accessible to everyone.

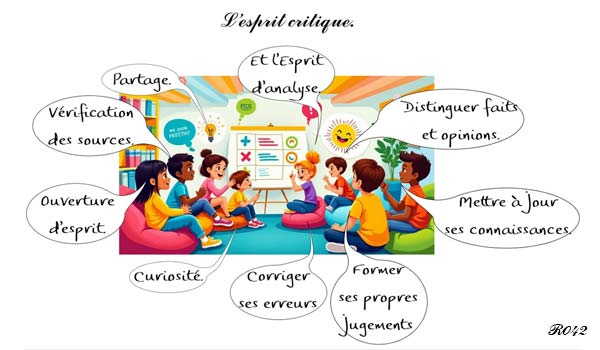

The development of critical thinking.

"Sharing. Verifying sources. Open-mindedness. Curiosity. Correcting one’s mistakes.

Forming one’s own judgement. Keeping one’s knowledge up to date.

Distinguishing between facts and opinions. Not to mention an analytical mind."

Indeed, the majority of the problems observed stem from a lack of public education regarding how AI works, whereas its designers, who strive to replicate the functioning of neurons, have a perfect understanding of the biases inherent in the human brain.

Even though the abilities we are born with [see: children’s abilities and children’s moral sense] seem best equipped to help us cope with life in the real world, they are not free of vulnerabilities.

Many young people display great intellectual maturity alongside significant immaturity in matters of identity. The need to belong can lead them to identify with various movements: this is how many joined the hippie movement which, in reaction to the Vietnam War, adopted the slogan ‘make love, not war’.

Similarly, they may identify with Islamism, unaware that Muhammad taught tolerance.

Let us add that, in humans, a multitude of psychological dysfunctions lead to inappropriate reactions: the fear of punishment leads to lying, whilst the inability to distinguish truth from falsehood gives rise to inconsistencies. Consciously or unconsciously, reality is all too often obscured, whereas the intelligence we have explored in very young children leads them to recognise reality above all else.

Thus, a truly intelligent computer system, even if it lacks a critical mind, should be able to identify verified information. But for this to happen, its algorithms would need to direct it only towards reliable sources, primarily scientific ones, which alone are capable of re-evaluating information that has become outdated and so keeping its knowledge up to date.

A system that merely scours the internet is, in fact, highly likely to absorb the nonsense reproduced millions of times over on social media.

« Many thanks to the cat and its wisdom. »

« Critical thinking is the only door that opens onto the path to freedom. »

7 – How can we escape the pernicious influence of AI… whilst still benefiting from its information capabilities ?

A - Through research :

Of course, this does not mean banning AI from our computers or mobile phones, as the proven knowledge it provides can only enrich our understanding. The aim is to preserve, or reclaim, what AI may cause us to lose: namely, curiosity and the quest for truth. This means accepting the constant questioning of knowledge which, since the world began, has been in a state of perpetual evolution.

Today, we know there is a risk of receiving false information. It has therefore become essential to systematically verify the information we are given. And it is just as necessary to share verified information.

- So, first and foremost, never rely on forums or social media for reliable information. Generally speaking, the freedom of expression championed by some is the freedom of two contradictory forms of expression: one informing, the other misinforming. Moreover, the responses consist of personal opinions (I think that... I was told that... I know someone who...) more than reliable answers.

- Secondly, only use AI that cites its sources, and compare the different sources provided : for example, the French search engine Qwant does not place cookies, offers a version designed for children (Qwant Junior), and its AI clearly cites all its sources.

- When our favourite AI leads us down the wrong path, another approach is to specify the keywords that will make it more effective; for example, when searching for medical information, specify: Hospital, University Hospital, INSERM… For scientific information: CNRS, *Nature*, *Science et Avenir*, *Science et Vie*…

If the AI does not recognise acronyms, it may be necessary to enter the full name of the hospital or university centre [see: how to conduct a search (in French)].

You should also be wary of adverts that exaggerate the quality of the device or medication recommended, as well as AIs that impose themselves on your search: they certainly have something to sell you!

- Apart from providing immediate information, AI offers another advantage : it allows you to check a website’s reliability. By directing it to the address in question, you place an intermediary between your computer and a potentially dangerous site.

- Finally, to avoid making a fool of yourself in front of your followers by sharing fake news, you can also use an AI such as Vera, a tool created by the ‘lareponse.tech’ collective, which is available via WhatsApp and connected to over 400 reliable sources.

B - Through gaming :

In an age when online games are commonplace and in high demand, a game open to all could be set up in which players must find out, for example, and without cheating :

- What potentially dangerous chemical is found in this hair lotion, which is touted for its extraordinary results?

- What impact does this same product have on biodiversity?

- Which influencer holds the record for telling the most lies?

- Which website holds the record for fake news?

(For example: the Russian network Pravda, with 17,000 articles a day, is the world’s biggest purveyor of disinformation, having published 6.3 million articles via 286 websites in 49 languages.)

Small prizes could even be awarded :

- €50 to whoever lists the most disinformation websites.

- €30 to the first person to list all the lies hidden in the ‘X’ advert.

- Since its creation, which processed food product has caused the most deaths?

- Which drink holds the record for cases of diabetes and obesity?

(E.g.: Sugary drinks are responsible for 2.2 million new cases of type 2 diabetes and 1.2 million cases of cardiovascular disease per year worldwide, according to a study by Tufts University in the US).

- Which word is hidden in the following description?

Excessive consumption of xxxxxxxx can lead to cardiovascular problems, caffeine addiction, neurological disorders (anxiety, hallucinations, convulsions), dehydration and dental problems.

- Which figure is hidden behind the following AI-generated description?

xxxxxxxx is often cited for his political decisions, which are deemed foolish and dangerous, characterised by a self-assured ignorance, a lack of restraint and excessive narcissism, with over 30,000 lies told during his presidency.

Numerous lottery-style competitions could thus be offered on social media, enriching us, in addition to money, with a deep understanding of the human soul.

Conclusion :

We have seen [in French] that early computer science research failed when it created software mimicking the functioning of the adult brain. Then, drawing inspiration from child development, researchers paved the way for machine learning.

This innovation has proven its worth, but, exploited today solely for the sake of power and profit, it may well fail to become true intelligence. Indeed, whilst a child’s intelligence develops through their sensitivity, the « intelligence » (sic) of machines relies solely on texts devoid of any emotion. Drawing its source from human language, it imbues its capacity for memorisation and sorting with all the demons that now inhabit the mind of ‘homo insensatus’.

But all is not lost, and perhaps one day…

Until that day comes, do check the sources provided, including the information on the website you are currently viewing. Scientific discoveries evolve very quickly, and anything that was valid yesterday may prove obsolete today and will inevitably be so tomorrow.

« Only beliefs are unchanging. »

- Communication in Homo sapiens : (coming soon)

Bibliography :